DOES AI DREAM OF WRITING A REVIEW? A REFLECTION ON THE ROLE OF HUMAN AUTHORS IN CREATING A SCOPING REVIEW

By Associate Prof. Dr. Chin Kok Yong

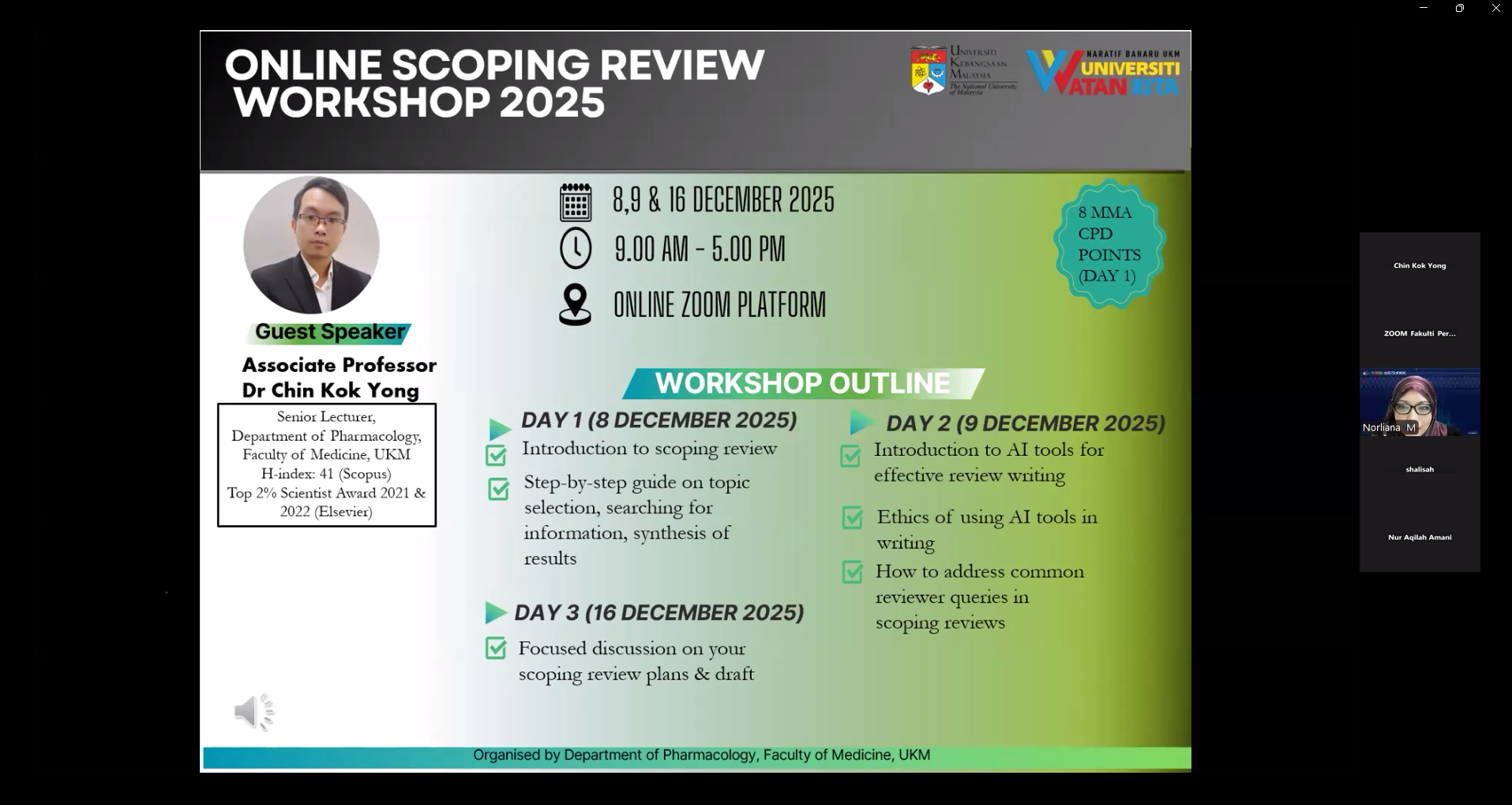

The scoping review workshop has been the Department of Pharmacology’s annual research event. The task of conducting the workshop should have been easy, since I have been a regular speaker and our group has published quite a number of scoping reviews. Yet, recent advances in large language models (LLMs) weighed heavily on my heart. I began to wonder whether the art of writing a review article is still relevant today. LLMs can definitely read, compile and summarise articles faster than we researchers can. What more can we teach our junior researchers about scientific review writing?

With these doubts in mind, I began updating the workshop’s PowerPoint presentation, a job that can easily be undertaken by an artificial intelligence (AI) tool like Gamma or NotebookLM. However, I prefer to do this manually for a specific reason. No AI/LLM tool has undergone the herculean process of tackling harsh comments from peer reviewers as we authors have. So, we can offer some useful insights based on our human experience.

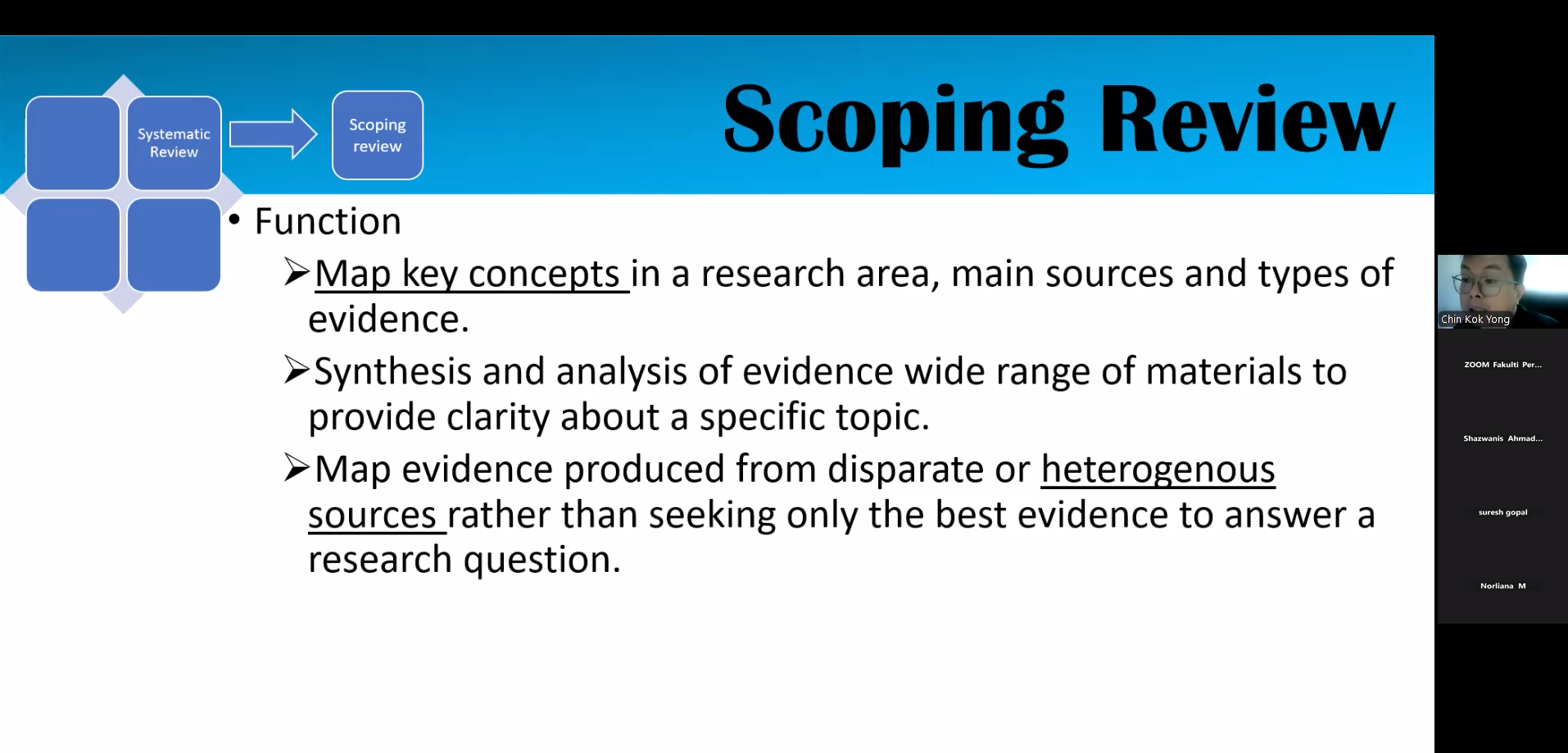

As the preparation progressed, I realised that none of the AI/LLM tools can yet undertake the critical step of a comprehensive literature search in scoping reviews, owing to the inherent biases of the information sources available to them. The commonly used Consensus and Scite prioritise open access sources regardless of quality. Many important pieces of literature are hidden behind paywalls and are not prioritised. Therefore, this step cannot be automated yet. Asking a general-purpose AI/LLM, such as ChatGPT or Gemini, to perform a literature search and suggest scientific references remains a dangerous endeavour and should not be attempted. Even the latest models still hallucinate and can suggest plausible-looking references that do not exist. Thus, literature searching is best conducted with a well-planned search string across well-known scholarly databases, emphasising reproducibility in line with the Preferred Reporting Items for Systematic Reviews and Meta-analyses (PRISMA) guidelines.
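To illustrate what a well-planned, reproducible search string might look like in practice, here is a minimal Python sketch. Everything in it is an assumption for illustration only: the topic, the synonym lists, the database names and the helper functions `build_search_string` and `search_log_entry` are hypothetical, and real databases each have their own field tags and syntax that the string would need to be adapted to.

```python
from datetime import date


def build_search_string(concepts):
    """Join synonyms with OR inside each concept block, then join blocks with AND."""
    blocks = []
    for synonyms in concepts:
        # Quote multi-word terms so they are searched as exact phrases.
        terms = [f'"{term}"' if " " in term else term for term in synonyms]
        blocks.append("(" + " OR ".join(terms) + ")")
    return " AND ".join(blocks)


def search_log_entry(database, query, run_date, hits=None):
    """Record what was searched, where and when, so the search can be repeated."""
    return {
        "database": database,
        "query": query,
        "date": run_date.isoformat(),
        "hits": hits,  # fill in by hand after actually running the search
    }


# Hypothetical topic: one list of synonyms per concept.
concepts = [
    ["vitamin E", "tocotrienol"],
    ["osteoporosis", "bone loss"],
]
query = build_search_string(concepts)

# Example database names only; the same string is logged for each one.
search_log = [
    search_log_entry(db, query, date.today()) for db in ("PubMed", "Scopus")
]

print(query)
```

Keeping the exact string, the database and the search date together in one log is what allows another researcher to repeat the search later, and the hit counts recorded by hand feed directly into the PRISMA flow diagram.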

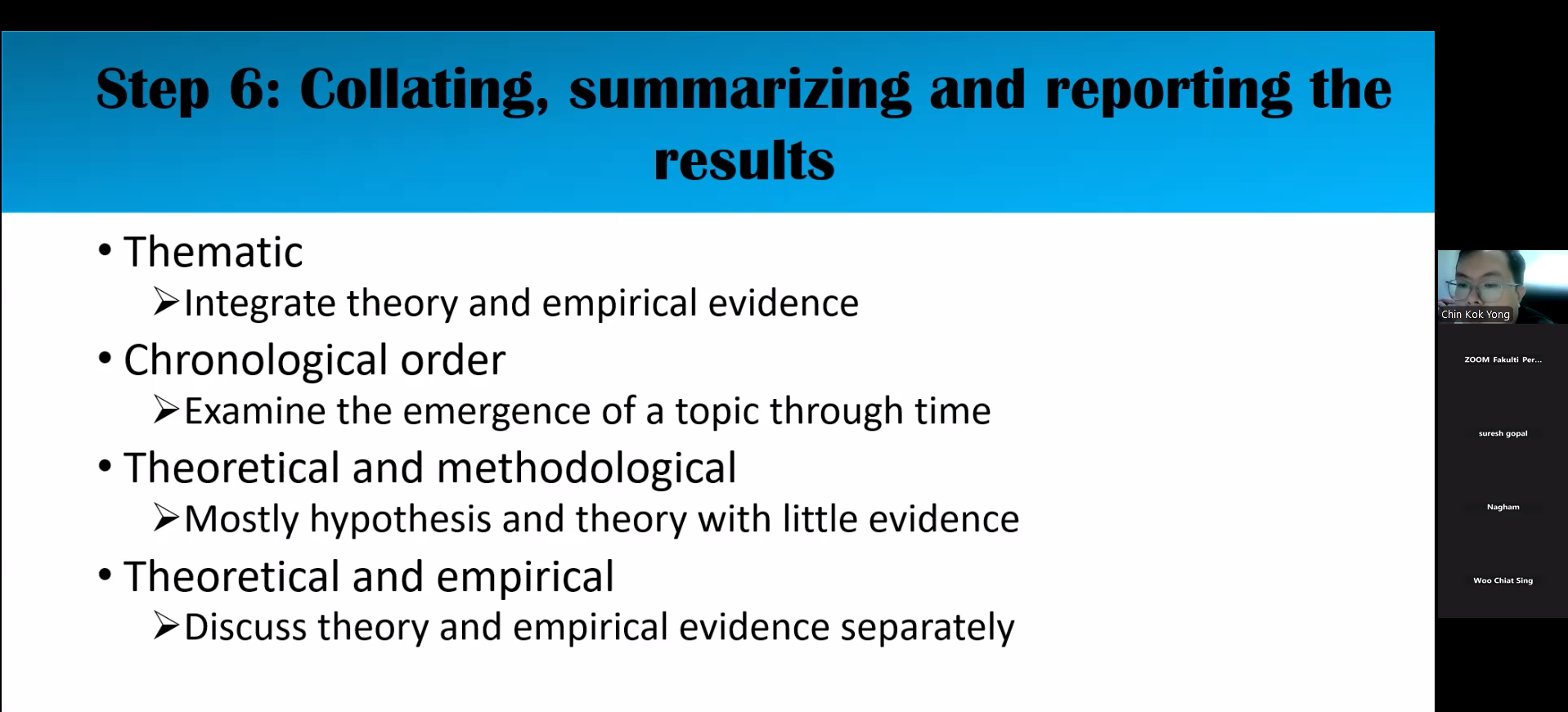

Once the literature search and screening are completed, I believe data extraction and synthesis are the steps most likely to be automated using a tool such as NotebookLM. This is where prompt engineering matters. Researchers should carefully plan their prompts according to the basic structure of a scoping review. Apart from the standard reporting of search numbers and study characteristics, data synthesis should be guided by our understanding of the articles. This means that we, as researchers, actually have to read the articles first before asking the tools to compile and synthesise the current literature. It is we who should decide on the themes and the direction of the synthesis. After automated extraction and synthesis, we should verify the outputs of AI/LLM tools against the original articles, as they could be inaccurate.

Upon completion of the synthesis, we are ready to draft the entire scoping review. I understand it is tempting to ask AI/LLM tools to churn out the entire manuscript, but many publishers openly oppose this practice for ethical reasons. Many junior writers have not yet developed their own style and voice in scientific writing. Outsourcing writing to automation tools hinders the development of their writing skills. In addition, a piece of writing is an intellectual exchange between supervisors and students, in which both parties have invested in organising the scientific arguments so that they are presentable to readers. Outsourcing this step undermines the knowledge exchange and renders the writing characterless. The resulting text may sound logical to naive readers, but it lacks the personal nuances that make writing alive and interesting.

Nonetheless, I still think that AI/LLM tools are exceptionally helpful in paraphrasing and proofreading manuscripts. They are also useful for translation: my postgraduate students from China usually write their first drafts in Mandarin and translate the text into English before sending the manuscript to me. However, in both cases, the use of automation tools should be declared in the manuscript’s back matter. Failure to declare may result in rejection. We should also keep all versions of our manuscript, including conversations with automation tools regarding text translation and polishing, to prove that the manuscript has sufficient human input to justify our roles as authors.

As I neared the end of preparing the workshop materials, I realised that human authors still have a significant role in creating a well-thought-out scoping review, or any literature review for that matter. Until a more powerful AI agent emerges, the human author remains fully responsible for ensuring the comprehensive coverage of the literature search, the accuracy of the synthesis and the generation of themes. As I have always mentioned in the workshop, conducting a scoping review is an essential skill for junior researchers before they move on to more advanced reviews, such as systematic reviews and meta-analyses. It is a stepping stone for researchers to learn about literature search, appraisal and analysis, and eventually to identify research gaps to be bridged in the field. Therefore, do not neglect the human touch in creating a scoping review. If we outsource the task entirely to AI/LLM tools, we will be replaced or displaced in the near future.